Imagine telling Claude to preprocess your unstructured data, making your documents RAG-ready. Or seamlessly asking it questions about any document—without worrying about file type limitations. How would you make that possible? Unstructured provides all the tools you need for data processing, but how can Claude Desktop, Cursor, or your LLM agent access and use them? That’s where the Model Context Protocol (MCP) comes in.

In this tutorial, we'll walk through building an MCP server that integrates with the Unstructured API. You'll learn the fundamentals of MCP architecture, explore Unstructured’s capabilities, and follow a step-by-step implementation guide. While this tutorial doesn’t cover full MCP integration, it provides a solid foundation to get you started.

What is MCP?

Model Context Protocol (MCP) is an open protocol that standardizes how applications provide context to LLMs. Think of it as a common language that helps LLMs communicate effectively with your applications. MCP is particularly powerful because it:

- Standardizes context delivery to LLMs

- Enables building complex workflows and agents

- Promotes reusability and interoperability

- Simplifies the integration with different APIs

Unlike integration methods that require a custom implementation for each LLM, MCP offers a standardized approach that works across different clients. Once you build your MCP server, you can use it from Claude, Windsurf, or any custom LLM tool or agent.
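To make the "common language" idea concrete: MCP messages are JSON-RPC 2.0, and a client discovers and invokes tools through the `tools/list` and `tools/call` methods. The sketch below only builds the request payloads. The tool name `list_workflows` and its empty arguments are hypothetical placeholders, not taken from any real server.

```python
import json
from typing import Optional


def make_request(request_id: int, method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request of the kind an MCP client sends."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return message


# Step 1: the client asks the server which tools it offers.
list_tools = make_request(1, "tools/list")

# Step 2: the client invokes one tool by name with JSON arguments.
# "list_workflows" is a hypothetical tool name used for illustration.
call_tool = make_request(
    2, "tools/call", {"name": "list_workflows", "arguments": {}}
)

if __name__ == "__main__":
    print(json.dumps(list_tools, indent=2))
    print(json.dumps(call_tool, indent=2))
```

Because every compatible client speaks these same messages, the server does not need to know which client is on the other end.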

Why Unstructured API?

The Unstructured API is an interface to the Unstructured Enterprise ETL product, which is designed for processing and managing unstructured data for GenAI applications. The API provides all of the capabilities currently available through the Unstructured UI, for example:

- Managing document processing workflows

- Handling multiple source and destination connectors

- Extracting and structuring content from various document formats

By building an MCP server on top of the Unstructured API, you can provision unstructured data for your GenAI systems simply by telling your LLM what to do in natural language.

Imagine asking your LLM, in plain English, for GenAI-ready data:

- "Convert data from this S3 bucket to JSON"

- "Run this Unstructured workflow: transform, chunk, and embed the data in my Google Drive with text-embedding-3-small and push it to Pinecone"

Once built, you can integrate your MCP server with Claude Desktop, Cursor, Windsurf, or any other client and control the entire data preprocessing pipeline as if you were chatting with a teammate.

Isn’t it exciting? Let's walk through how you can build your own MCP server to expose some of the Unstructured API functionality to LLMs, LLM agents and other MCP clients.

Note: We are currently working on our Unstructured MCP server implementation, and you can follow our progress in this GitHub repo. We’ll use this repo as a reference for this tutorial.

Project Setup

Let's start by setting up our project. First, set up your uv environment and add the required dependencies (see README.md).

You will also need to create a .env file with your Unstructured API key:
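The file holds a single `KEY=VALUE` line. The variable name used below, `UNSTRUCTURED_API_KEY`, is an assumption for illustration; use whatever name the repo's README specifies. This stdlib-only sketch shows one way a server might read the key at startup (in practice, a library such as python-dotenv does the same job).

```python
import os
from pathlib import Path


def load_env_file(path: str = ".env") -> None:
    """Copy KEY=VALUE pairs from a .env file into os.environ (simplified parser)."""
    env_path = Path(path)
    if not env_path.exists():
        return
    for raw_line in env_path.read_text().splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue  # skip blanks, comments, and malformed lines
        key, _, value = line.partition("=")
        # Variables already set in the environment win over the file.
        os.environ.setdefault(key.strip(), value.strip().strip("\"'"))


def get_api_key() -> str:
    """Return the API key, or fail early with a clear message."""
    # UNSTRUCTURED_API_KEY is an assumed variable name.
    api_key = os.environ.get("UNSTRUCTURED_API_KEY")
    if not api_key:
        raise RuntimeError("UNSTRUCTURED_API_KEY is not set; add it to your .env file")
    return api_key
```

Failing at startup when the key is missing tends to be easier to debug than an authentication error in the middle of a tool call.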

To start your free 14-day trial, sign up for Unstructured today and follow these steps to access your API key for the Unstructured API.

Here's the project structure we'll be working with. Keep in mind that the structure in the linked GitHub repo may differ as this is a project that is being actively developed:

Building the MCP Server

Core MCP Concepts

In general, MCP servers can provide three main types of capabilities:

- Resources: File-like data that can be read by clients

- Tools: Functions that can be called by the LLM

- Prompts: Pre-written templates that help users accomplish specific tasks

For our Unstructured MCP server we will only need to build tools for managing document processing workflows and connectors through the Unstructured API.

To build the core of this server we’ll use FastMCP, which significantly simplifies MCP server implementation. With the FastMCP class, you can use Python type hints and docstrings to automatically generate tool definitions, making it easy to create and maintain MCP tools.
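To see why type hints and docstrings are enough to describe a tool, here is a toy illustration using only the standard library. It is not FastMCP's actual implementation: `describe_tool` and `list_workflows` are hypothetical names, and the schema mapping is deliberately simplified.

```python
import inspect
from typing import get_type_hints

# Simplified mapping from Python types to JSON Schema type names.
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(fn) -> dict:
    """Derive a tool description from a function's signature and docstring."""
    hints = get_type_hints(fn)
    properties, required = {}, []
    for name, param in inspect.signature(fn).parameters.items():
        # Unknown or missing annotations fall back to "string" in this sketch.
        properties[name] = {"type": JSON_TYPES.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "inputSchema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


def list_workflows(status: str, limit: int = 10) -> str:
    """List workflows, optionally filtered by status."""
    return f"{limit} workflows with status {status}"


if __name__ == "__main__":
    print(describe_tool(list_workflows))
```

The function name, docstring, and annotations carry everything a client needs to know in order to call the tool, which is why no separate schema file has to be maintained by hand.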

Core Components

- FastMCP Server: The main server implementation using the FastMCP class

- Application Context: MCP servers use a lifecycle management system to handle initialization, state management, and cleanup.

- MCP Tools: A collection of tools for interacting with the Unstructured API

Server Implementation

Here's our main server implementation:

The application context lets you initialize resources once, share them across requests, clean them up properly on server shutdown, and avoid resource leaks.
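This lifecycle pattern can be sketched with an async context manager. In the MCP Python SDK, a function of this shape can be passed to `FastMCP(..., lifespan=...)`; the sketch below stays within the standard library so that it runs on its own. `AppContext`, `FakeClient`, and `app_lifespan` are illustrative names, and `FakeClient` is a hypothetical stand-in for a real Unstructured API client.

```python
import asyncio
from contextlib import asynccontextmanager
from dataclasses import dataclass
from typing import AsyncIterator


class FakeClient:
    """Hypothetical stand-in for an API client that holds a connection."""

    def __init__(self) -> None:
        self.is_open = True

    def close(self) -> None:
        self.is_open = False


@dataclass
class AppContext:
    """State shared by every tool call for the lifetime of the server."""

    client: FakeClient


@asynccontextmanager
async def app_lifespan(server: object) -> AsyncIterator[AppContext]:
    client = FakeClient()  # initialized once, at startup
    try:
        yield AppContext(client=client)  # shared across requests
    finally:
        client.close()  # runs on shutdown, even after an error


async def main() -> FakeClient:
    async with app_lifespan(server=None) as ctx:
        assert ctx.client.is_open  # tools would use ctx.client here
        client = ctx.client
    return client


if __name__ == "__main__":
    closed_client = asyncio.run(main())
    print("client open after shutdown:", closed_client.is_open)
```

Putting cleanup in the `finally` branch is what guarantees the client is released no matter how the server stops.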

MCP Tools

Tools are the core functionality providers in an MCP server. When building an MCP server with FastMCP, you implement tools for interacting with the Unstructured API by decorating ordinary Python functions with @mcp.tool(). Here’s an example of a tool that calls the Unstructured API to list available workflows:
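A minimal sketch of such a tool follows. It assumes the MCP Python SDK (`pip install mcp`) for `FastMCP` and falls back to a no-op decorator when the SDK is not installed, so the logic can still be tried out. `fetch_workflows` is a hypothetical stub returning made-up records; in a real server it would call the Unstructured API through its client, whose exact calls are not shown here.

```python
import asyncio

try:
    from mcp.server.fastmcp import FastMCP

    mcp = FastMCP("Unstructured API")
    tool = mcp.tool
except ImportError:
    mcp = None

    def tool():
        """Fallback decorator factory that leaves the function untouched."""
        return lambda fn: fn


async def fetch_workflows() -> list:
    """Hypothetical stub; replace with a real Unstructured API call."""
    return [
        {"id": "wf-1", "name": "s3-to-pinecone"},
        {"id": "wf-2", "name": "gdrive-to-json"},
    ]


@tool()
async def list_workflows() -> str:
    """List the workflows available in the Unstructured API."""
    workflows = await fetch_workflows()
    if not workflows:
        return "No workflows found"
    lines = [f"- {wf['name']} (ID: {wf['id']})" for wf in workflows]
    return "Available workflows:\n" + "\n".join(lines)


if __name__ == "__main__":
    print(asyncio.run(list_workflows()))
```

Returning a short, human-readable string rather than a raw API object gives the LLM something it can relay to the user directly.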

Running the Server

Once ready, you can run the server with the following command:

To make this MCP server available in Claude Desktop on macOS, go to ~/Library/Application Support/Claude/ and create a claude_desktop_config.json file if one doesn't already exist.

In this file add the following:
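The entry generally takes the shape of an `mcpServers` object that maps a server name to the command that launches it. The sketch below builds that JSON in Python so the placeholders are explicit. The server name, the `uv` arguments, the project path, and the `server.py` filename are all assumptions to replace with your own values.

```python
import json

# Every value here is a placeholder: adjust it to your own project.
PROJECT_DIR = "/ABSOLUTE/PATH/TO/YOUR/PROJECT"

config = {
    "mcpServers": {
        # The key is a label of your choosing for this server.
        "unstructured": {
            "command": "uv",
            "args": ["--directory", PROJECT_DIR, "run", "server.py"],
        }
    }
}

if __name__ == "__main__":
    # Paste the printed JSON into claude_desktop_config.json.
    print(json.dumps(config, indent=2))
```

Using an absolute path matters here because Claude Desktop launches the command from its own working directory, not from your project folder.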

Restart the Claude Desktop application, and you should now be able to ask Claude to interact with Unstructured.

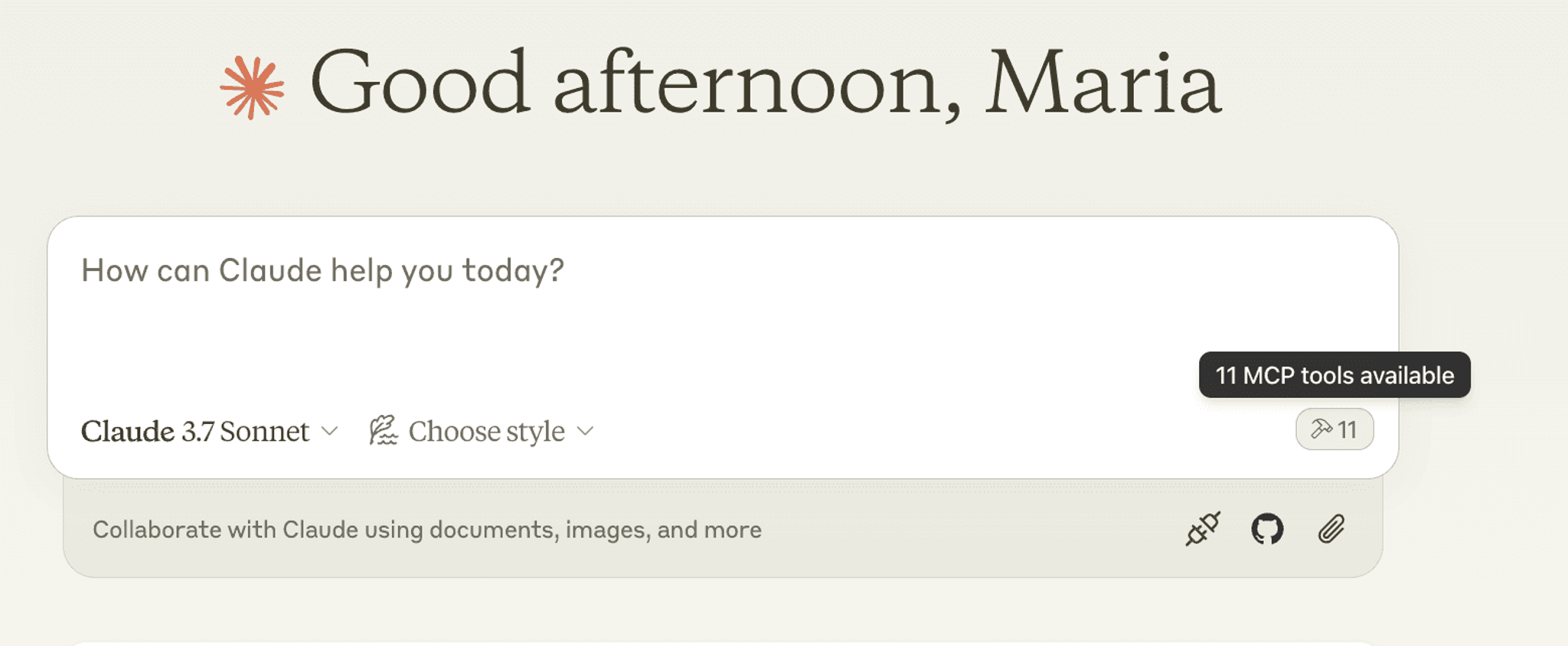

You should see a tiny hammer icon indicating how many tools you have available:

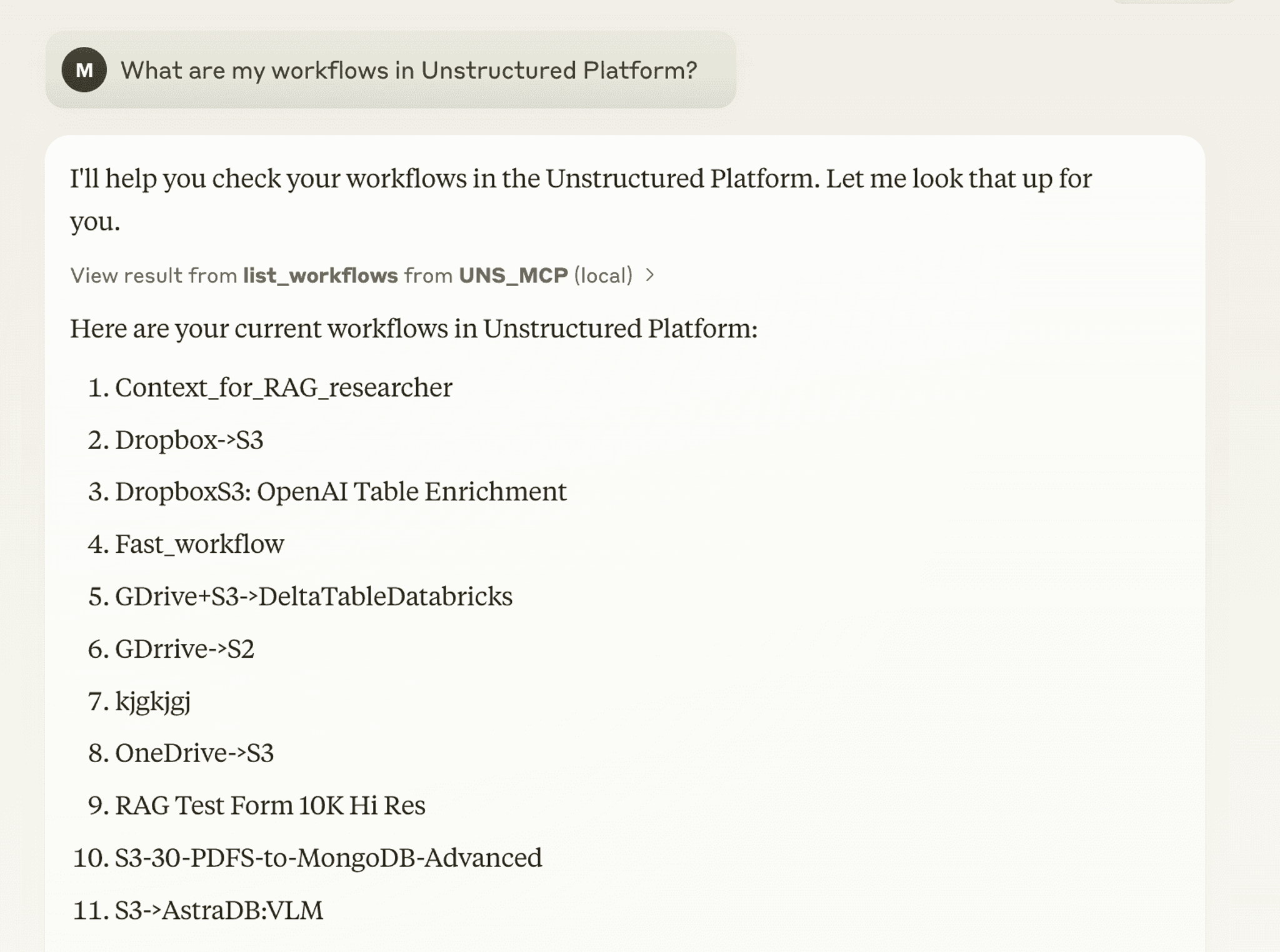

Now you can use what you built:

Type in your requests, questions, or instructions and you are ready to go!

Best Practices

- Take full advantage of FastMCP's built-in context management; it helps keep your code organized.

- Prefer a modular design. Keep your connectors in separate modules so they are more manageable and reusable, and keep each tool focused on one task so it stays simple and easy to maintain.

- Validate inputs so that no harmful data gets through, and handle sensitive data, such as API keys, securely at every stage of your app.
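As one illustration of input validation, a tool can reject malformed identifiers before they ever reach the API. The sketch assumes workflow IDs are UUIDs, which is an assumption made for this example only; `validate_workflow_id` and `get_workflow_info` are hypothetical names.

```python
import uuid


def validate_workflow_id(workflow_id: str) -> str:
    """Return the normalized ID, or raise ValueError if it is not a UUID."""
    try:
        return str(uuid.UUID(workflow_id.strip()))
    except (ValueError, AttributeError) as exc:
        raise ValueError(f"Invalid workflow ID: {workflow_id!r}") from exc


def get_workflow_info(workflow_id: str) -> str:
    """Tool-style function that validates its input before doing any work."""
    try:
        clean_id = validate_workflow_id(workflow_id)
    except ValueError as exc:
        # Return a readable message so the LLM can correct its input.
        return f"Error: {exc}"
    # A real tool would call the API here; this sketch only echoes the ID.
    return f"Would fetch workflow {clean_id}"
```

Returning the error as text, rather than letting the exception propagate, gives the calling LLM a chance to retry with a corrected value.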

Conclusion

Building an MCP server with the Unstructured API unlocks a powerful new way to preprocess unstructured data for GenAI applications. By leveraging MCP, you can seamlessly integrate Unstructured’s document processing capabilities into Claude Desktop, Cursor, Windsurf, or any other LLM-powered client. Instead of manually handling data transformations, you can simply tell your LLM what you need in natural language—bridging the gap between AI and enterprise-grade data processing.

If you're excited about this integration, we encourage you to build your own MCP server using the approach outlined in this guide. Whether you want to customize tools, expand workflows, or streamline how your LLM interacts with data, the possibilities are vast.

To get started, check out our GitHub repo, where we're actively developing an Unstructured MCP server. Use it as a reference, contribute, or adapt it for your own use cases.

We can’t wait to see what you build. Let us know how you’re using MCP with Unstructured API—happy coding! 🚀

FAQ

What can you do with the Unstructured API through an MCP server?

The Unstructured API exposes the full capabilities of the Unstructured Enterprise ETL platform, including managing document processing workflows, configuring source and destination connectors, extracting structured content from PDFs, HTML, PPTX, images, and more, and automating end-to-end data pipelines. When wrapped in an MCP server, all of these operations become accessible to LLMs through natural language, so you can instruct Claude or another client to chunk, embed, and push data to a vector store like Pinecone without writing any additional code.

Does Unstructured support multiple source and destination connectors through the API?

Yes. The Unstructured API supports a range of source connectors (such as S3 and Google Drive) and destination connectors (such as Pinecone), mirroring what is available in the Unstructured UI. This means an MCP server built on top of the API can orchestrate full ingestion pipelines, from pulling raw documents out of cloud storage to delivering chunked, embedded output to a vector database, all triggered by a single natural language instruction.

What is the Model Context Protocol (MCP)?

MCP is an open protocol that standardizes how applications provide context to large language models. Rather than building a custom integration for each LLM, you build one MCP server and it works across any compatible client, including Claude Desktop, Cursor, and Windsurf.

What is the difference between an MCP server and a traditional API integration?

A traditional API integration requires each LLM or application to implement its own custom logic for calling your service. An MCP server exposes that same functionality through a standardized protocol, so any MCP-compatible client can discover and invoke your tools without additional custom code on the client side.

What is FastMCP and why is it useful for building MCP servers?

FastMCP is a Python library that simplifies MCP server development by using standard Python type hints and docstrings to automatically generate tool definitions. Instead of manually writing protocol-level boilerplate, you decorate regular Python functions with @mcp.tool() and FastMCP handles the rest, making it faster to build, easier to read, and simpler to maintain.