Benchmarks tell the full story.

We benchmarked Unstructured against Reducto, LlamaParse, Docling, Snowflake, Databricks & NVIDIA across 1,000+ enterprise pages.

Benchmarking the Landscape

We could tell you we're the best document parsing solution. Instead, we'll show you the data and let you decide.

How We Evaluated

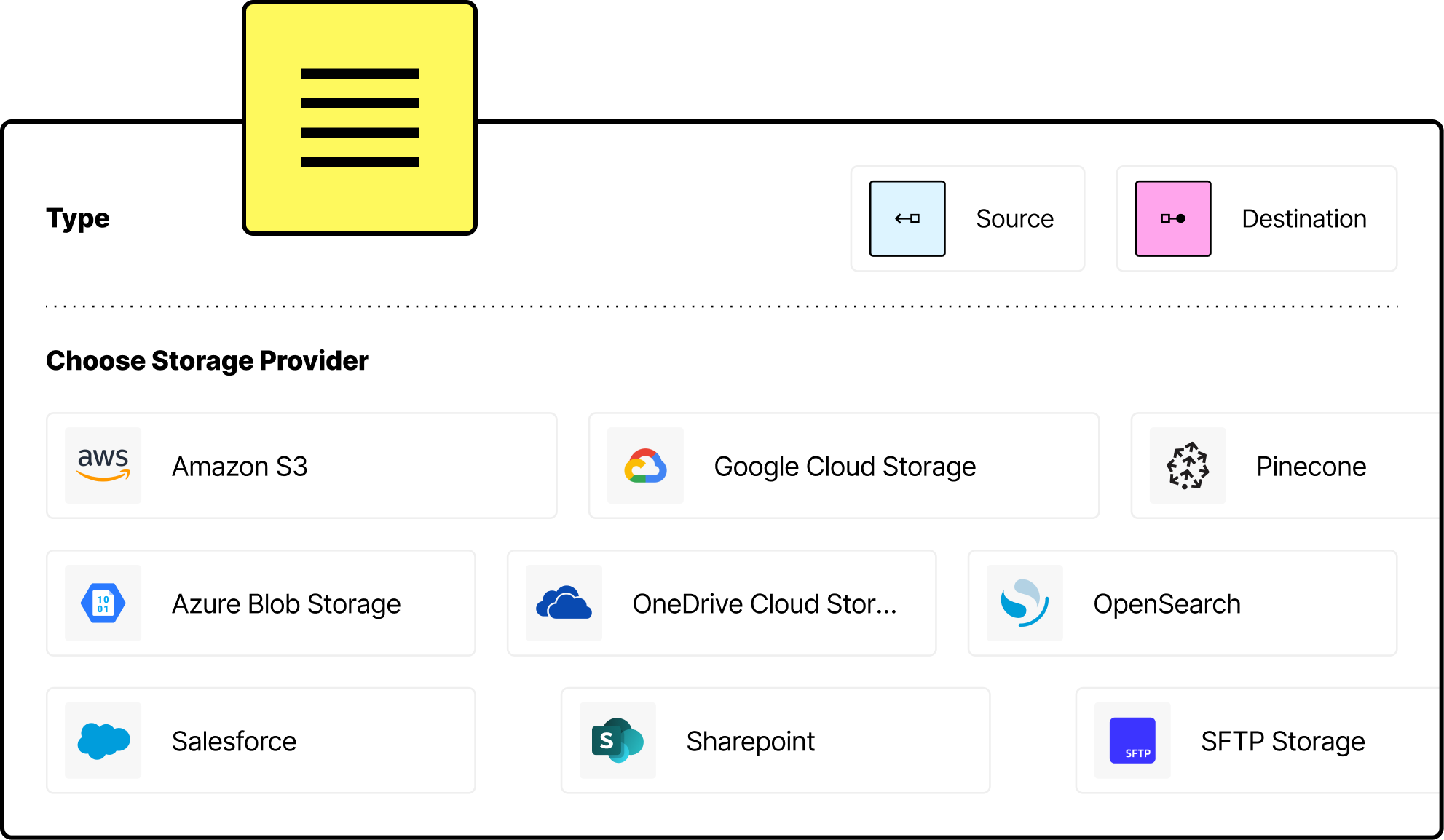

We benchmarked Unstructured against leading document parsing tools, including Reducto, LlamaParse, Docling, Snowflake, Databricks, and NVIDIA, using a real-world enterprise dataset of over 1,000 pages. The documents reflect messy production reality: scanned invoices, complex layouts, nested tables, handwritten notes, and industry-specific formats. We measured performance across four key dimensions.

Landscape Across The Tools

For Unstructured, Reducto, and Docling, we tested multiple pipeline configurations. For the other tools, we used their default configurations.

Overall Outcome

| System | Adjusted CCT | Tokens Added | Element Alignment | Table Cell Level Content Accuracy | Table Cell Level Spatial Accuracy |
|---|---|---|---|---|---|
| | #1 0.880 | #2 0.051 | #4 0.574 | #1 0.820 | #1 0.813 |
| | 0.809 | 0.053 | 0.417 | 0.615 | 0.623 |
| | 0.648 | 0.070 | 0.339 | 0.559 | 0.651 |
| | 0.792 | 0.102 | 0.608 | 0.556 | 0.583 |
| | 0.812 | 0.124 | 0.595 | 0.708 | 0.706 |
| | 0.835 | 0.069 | 0.277 | 0.522 | 0.578 |
| | 0.716 | 0.135 | 0.599 | 0.657 | 0.716 |
| | 0.792 | 0.071 | 0.421 | 0.687 | 0.710 |
| | 0.764 | 0.161 | 0.452 | 0.716 | 0.750 |
| | 0.680 | 0.257 | 0.430 | 0.627 | 0.644 |
| | 0.763 | 0.167 | 0.440 | 0.705 | 0.710 |
| | 0.742 | 0.044 | 0.449 | 0.678 | 0.686 |
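The rank badges in the first row of the table can be checked against the column values. The sketch below is only an illustration: the helper name `rank_of` is hypothetical, and it assumes that lower is better for Tokens Added and higher is better for the other metrics, which is consistent with the badges shown.

```python
def rank_of(values: list[float], index: int, higher_is_better: bool = True) -> int:
    """Return the 1-based rank of values[index] within its column."""
    target = values[index]
    if higher_is_better:
        strictly_better = sum(1 for v in values if v > target)
    else:
        strictly_better = sum(1 for v in values if v < target)
    return strictly_better + 1


# Column values copied from the table above, in row order.
adjusted_cct = [0.880, 0.809, 0.648, 0.792, 0.812, 0.835,
                0.716, 0.792, 0.764, 0.680, 0.763, 0.742]
tokens_added = [0.051, 0.053, 0.070, 0.102, 0.124, 0.069,
                0.135, 0.071, 0.161, 0.257, 0.167, 0.044]
element_alignment = [0.574, 0.417, 0.339, 0.608, 0.595, 0.277,
                     0.599, 0.421, 0.452, 0.430, 0.440, 0.449]

# Ranks for the first row of the table.
first_row_ranks = {
    "Adjusted CCT": rank_of(adjusted_cct, 0),
    "Tokens Added": rank_of(tokens_added, 0, higher_is_better=False),  # assumed: lower is better
    "Element Alignment": rank_of(element_alignment, 0),
}
```

Under these assumptions the computed ranks for the first row match the #1, #2, and #4 badges in the corresponding columns.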

The Full Picture

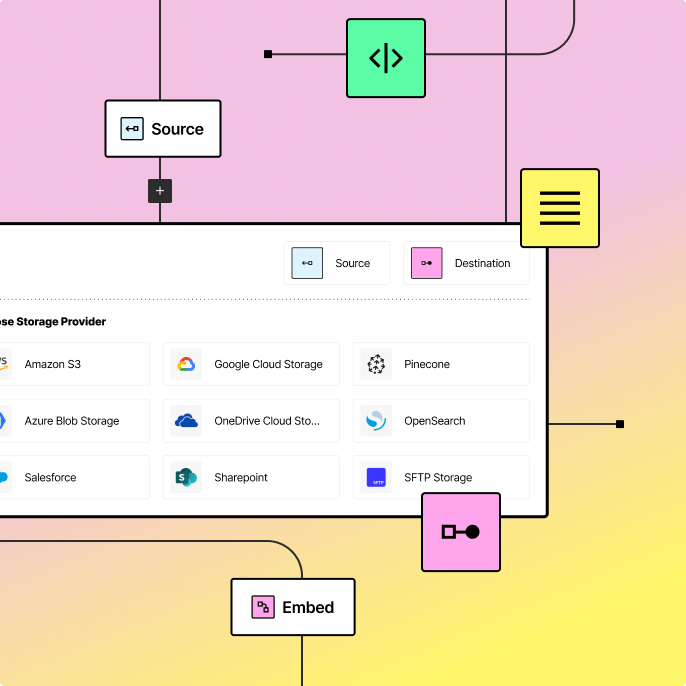

To provide complete transparency, we're sharing detailed results for every pipeline configuration we tested. The charts below also show how different Unstructured pipelines, each using a different partitioning and enrichment strategy, perform across the SCORE metrics above.

What We Found

The results across pipelines and metrics reveal a few patterns worth understanding before you dig into the charts.

Our Detailed Results

Each pipeline below uses a different combination of partitioning strategy and enrichments. Use these charts to find the best fit for your documents and use case.

Adjusted CCT by Pipeline

The core measure of text accuracy. Unlike basic string matching, it accounts for formatting differences so a pipeline that outputs structured HTML and one that outputs plain text can both score well — what matters is whether the actual words were captured.
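To make the idea of format-insensitive comparison concrete, here is a minimal sketch. It is not the Adjusted CCT implementation, whose exact definition is not given here; the function names `normalize` and `format_agnostic_similarity` are hypothetical, and the similarity measure (a token-level `difflib.SequenceMatcher` ratio) is a stand-in chosen for illustration.

```python
import re
from difflib import SequenceMatcher
from html import unescape


def normalize(text: str) -> list[str]:
    """Strip markup so that only the words remain, then tokenize."""
    text = re.sub(r"<[^>]+>", " ", text)    # drop HTML tags
    text = unescape(text)                   # decode entities such as &amp;
    text = re.sub(r"[|*#_`]+", " ", text)   # drop common markdown punctuation
    return text.lower().split()


def format_agnostic_similarity(predicted: str, reference: str) -> float:
    """Token-level similarity in [0, 1] that ignores output formatting."""
    matcher = SequenceMatcher(None, normalize(predicted), normalize(reference),
                              autojunk=False)
    return matcher.ratio()


html_output = "<h1>Invoice 42</h1><p>Total due: $100</p>"
plain_output = "Invoice 42\nTotal due: $100"
same_words_score = format_agnostic_similarity(html_output, plain_output)
missing_words_score = format_agnostic_similarity("Invoice 42", plain_output)
```

In this sketch the HTML and plain-text outputs score identically because they contain the same words, while an output that drops words scores lower.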

Tokens Added by Pipeline

Counts words a pipeline generated that were never in the source document. In production AI applications, invented content is often more damaging than missing content — it feeds false information directly into whatever is built on top.
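One simple way to picture this metric is a multiset difference between output tokens and source tokens. The sketch below is an assumption-laden illustration, not the benchmark's implementation: the name `tokens_added_rate`, the whitespace tokenization, and the choice to divide by the number of output tokens are all hypothetical.

```python
from collections import Counter


def tokens_added_rate(predicted: str, reference: str) -> float:
    """Share of output tokens that have no counterpart in the source text."""
    pred = Counter(predicted.lower().split())
    ref = Counter(reference.lower().split())
    added = pred - ref                      # keeps only surplus tokens
    total = sum(pred.values())
    # Assumed normalization: divide by the number of output tokens.
    return sum(added.values()) / total if total else 0.0


source_text = "total due 100"
faithful_rate = tokens_added_rate("total due 100", source_text)
invented_rate = tokens_added_rate("total due 100 payable immediately", source_text)
```

Here the faithful output adds nothing, while the second output contains two words out of five that never appeared in the source.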

Element Alignment by Pipeline

Documents are made up of different element types — headings, paragraphs, tables, figures. This measures whether a pipeline correctly identifies and consistently classifies those elements. A system that extracts all the text but mislabels what it is loses the document's structure entirely.
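As a rough illustration of comparing element classifications, the sketch below aligns a predicted sequence of element types against a reference sequence. It is a simplified stand-in, not the Element Alignment metric itself: the function name `element_type_agreement` and the use of `difflib.SequenceMatcher` are assumptions made for this example.

```python
from difflib import SequenceMatcher


def element_type_agreement(predicted: list[str], reference: list[str]) -> float:
    """Similarity in [0, 1] between two ordered sequences of element types."""
    if not predicted and not reference:
        return 1.0
    return SequenceMatcher(None, predicted, reference, autojunk=False).ratio()


reference_types = ["heading", "paragraph", "table"]
# Same text regions found, but the table was labeled as a paragraph.
mislabeled_types = ["heading", "paragraph", "paragraph"]

perfect_agreement = element_type_agreement(reference_types, reference_types)
mislabeled_agreement = element_type_agreement(mislabeled_types, reference_types)
```

The mislabeled table lowers the score even though every region was detected, which is the failure mode described above.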

Table Cell Level Content Accuracy by Pipeline

Tables are the hardest part of document parsing. This measures whether the text inside each individual cell was extracted correctly — wrong numbers in a financial table are worse than no table at all.
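A minimal sketch of a cell-level content check follows. It assumes tables are represented as lists of rows and compares cell texts as a multiset, ignoring position. The name `cell_content_accuracy`, the table representation, and the normalization are hypothetical and are not taken from the benchmark.

```python
from collections import Counter

Grid = list[list[str]]


def _norm(cell: str) -> str:
    """Collapse whitespace and case so trivial differences do not count."""
    return " ".join(cell.split()).lower()


def cell_content_accuracy(predicted: Grid, reference: Grid) -> float:
    """Fraction of reference cells whose text appears in the predicted table."""
    pred = Counter(_norm(c) for row in predicted for c in row)
    ref = Counter(_norm(c) for row in reference for c in row)
    matched = sum((pred & ref).values())    # multiset intersection
    total = sum(ref.values())
    return matched / total if total else 1.0


reference_table = [["Item", "Price"], ["Widget", "9.99"]]
wrong_number = [["Item", "Price"], ["Widget", "9.90"]]
content_score = cell_content_accuracy(wrong_number, reference_table)
```

A single wrong digit in one of four cells drops the score to 0.75 in this example.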

Table Cell Level Spatial Accuracy by Pipeline

Getting cell content right is only half the problem. This measures whether each piece of text landed in the correct row and column. Structure is what makes a table useful rather than just a list of values.
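The difference between content and placement can be sketched by checking each cell at its exact row and column. As before, this is an illustration under stated assumptions rather than the benchmark's method: the name `cell_spatial_accuracy` and the list-of-rows table representation are hypothetical.

```python
Grid = list[list[str]]


def _cells_by_position(table: Grid) -> dict[tuple[int, int], str]:
    """Map (row, column) to normalized cell text."""
    return {
        (r, c): " ".join(text.split()).lower()
        for r, row in enumerate(table)
        for c, text in enumerate(row)
    }


def cell_spatial_accuracy(predicted: Grid, reference: Grid) -> float:
    """Fraction of reference cells whose text sits at the same row and column."""
    ref_cells = _cells_by_position(reference)
    pred_cells = _cells_by_position(predicted)
    if not ref_cells:
        return 1.0
    correct = sum(1 for pos, text in ref_cells.items()
                  if pred_cells.get(pos) == text)
    return correct / len(ref_cells)


reference_table = [["Item", "Price"], ["Widget", "9.99"]]
swapped_row = [["Item", "Price"], ["9.99", "Widget"]]
spatial_score = cell_spatial_accuracy(swapped_row, reference_table)
```

Every value in `swapped_row` is correct, yet half the cells are in the wrong column, so this positional check returns 0.5 where a content-only check would return 1.0.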

Real-World Data, Rigorous Evaluation

The benchmarks tell the story. The methodology is open. The data speaks for itself. Try Unstructured and discover why leading teams rely on it to power production AI pipelines.

Ready for a demo?

See how Unstructured simplifies data workflows, reduces engineering effort, and scales effortlessly. Get a live demo today.